6 rules to perform realistic load testing

Performance testing encompasses a wide range of goals and ways of achieving them. Today, we will compare two major techniques of software performance testing and explore how the most effective one is conducted.

To check the load the system can withstand under certain conditions, one of two methods can be used:

- Using online services that generate a statistical load on certain pages of the application.

- Simulating the actions of real users by running purpose-built load scripts in several parallel threads (the “user behavior method”).

The first method is easier to implement, but it has several disadvantages. The requests created by online performance testing services are unpredictable in their intensity.

Online services also ignore some peculiarities of user behavior, for example, the correct transmission of dynamic parameters, the emulation of browser data caching, and realistic time delays between the actions of a virtual user.

The load applied by such services is closer to a DDoS attack than to effective performance testing. Quick testing that does not correlate with the real situation often turns out to be useless and even counterproductive, because its results may be unreliable and erroneous. Relying on such results may cause reputational and financial risks.

The user behavior method gives more precise results and helps identify bottlenecks in the system and eliminate them in time.

To obtain results that represent the actual system performance, you should reproduce user actions as accurately as possible. Virtual users make it possible to apply a load close to the one the system experiences in real use.

The virtual user is a script that makes requests to an application, mimicking the actions of a real user.

High precision is achieved through the design of the request flow, which should resemble the requests observed in the application’s production environment. Virtual users also interact with the system according to a behavioral model.

Let’s discuss six rules and recommendations to apply the load on the system using the behavioral method:

1. User behavior scripts design

Before getting down to load script design, the QA engineer should develop the most realistic patterns of virtual user behavior. To do this, it is necessary to answer a number of questions:

- What is the total number of the users working with the application (an average number per hour, day, month)?

- How many users work with the application at the moment of the peak load?

- What actions do users perform and how often?

- How are the roles in the system distributed among the users?

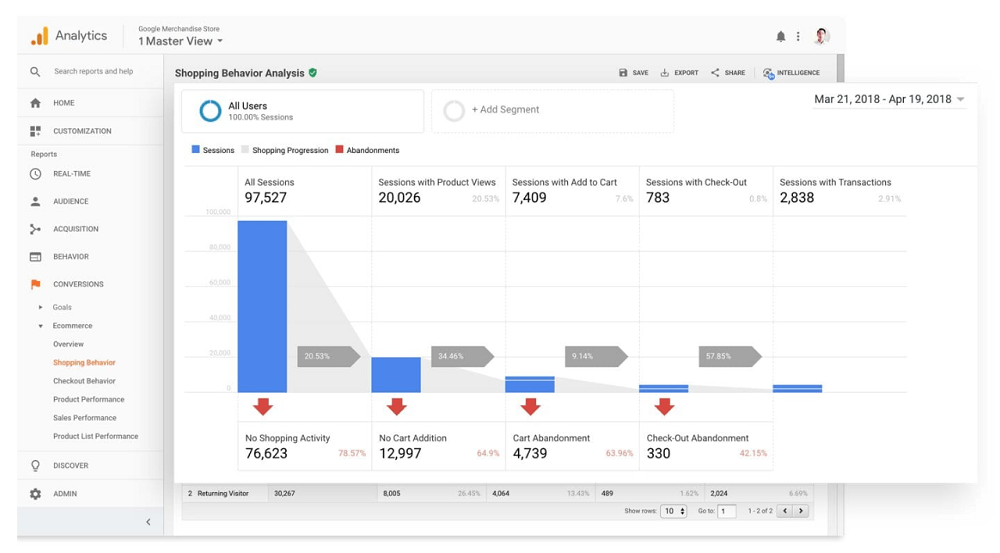

The answers can be obtained from the statistical data collected by web analytics tools, such as Google Analytics, Piwik, Adobe Analytics, etc. Transaction frequency analysis makes it possible to find the key business transactions and design the user behavior scenarios that will be used for load script development.

When the application is in the pre-release stage, test scripts can be created to check the main business processes and major functional modules. The necessary information can be obtained from both the business analyst and the customer. The scripts can also include rare but heavy requests pointed out by the development team, for example, requests that process information from many database tables and therefore apply a significant load to the system.

Besides determining the sequence of real user actions, the QA performance engineer studies the statistical distribution of time delays between transactions, known as the think time.

During the think time, the user does not send any requests but is busy completing the current action or thinking over the next step (for example, entering a password, choosing goods, reading a text, or writing a comment). The delay for the authorization transaction may reach ten seconds, while website menu navigation or search does not exceed five seconds. These delays must always be taken into account during script development.

A test script for an online shop is given below as an example. The delay time is indicated in brackets.

The script “Authorized user: order payment”

- A user opens the home page (5-10 seconds)

- The user clicks “Login for registered users” (5-10 seconds)

- Fills in the login form and clicks “Login” (10-60 seconds)

- Chooses a good and clicks “Add to order” (30-60 seconds)

- Clicks “Continue placing the order” (10-20 seconds)

- The previous two steps (adding a good and continuing the order) are repeated a random number of times (from 1 to 5)

- Clicks “Place the order” (10-20 seconds)

- Clicks “Pay the order” (10-20 seconds)

- Fills in the payment form and clicks “Pay” (10-20 seconds)

- Clicks “Confirm payment” (10-20 seconds)
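The script above can be sketched as data plus a small planner that draws a think time for every step. This is only an illustration: the step names are shortened, the helper `build_session` is hypothetical, and the uniform distribution of delays is an assumption (in practice the distribution should come from the analytics data). No requests are sent; the function only produces the plan a virtual user would follow.

```python
import random

# Think-time ranges (seconds) taken from the "Authorized user: order payment" script.
LOGIN_STEPS = [
    ("open home page", 5, 10),
    ("click 'Login for registered users'", 5, 10),
    ("fill in login form and click 'Login'", 10, 60),
]
SHOPPING_STEPS = [
    ("choose a good and click 'Add to order'", 30, 60),
    ("click 'Continue placing the order'", 10, 20),
]
CHECKOUT_STEPS = [
    ("click 'Place the order'", 10, 20),
    ("click 'Pay the order'", 10, 20),
    ("fill in payment form and click 'Pay'", 10, 20),
    ("click 'Confirm payment'", 10, 20),
]


def build_session(rng):
    """Return one virtual user's plan as a list of (action, think_time_seconds)."""
    repeats = rng.randint(1, 5)  # the shopping steps repeat 1 to 5 times
    steps = LOGIN_STEPS + SHOPPING_STEPS * repeats + CHECKOUT_STEPS
    # Assumption: delays are uniformly distributed within each range.
    return [(action, rng.uniform(low, high)) for action, low, high in steps]


if __name__ == "__main__":
    for action, pause in build_session(random.Random(42)):
        print(f"{action}: think for {pause:.1f} s")
```

In a real load testing tool, each planned action would be replaced by the recorded request, and the think time by the tool's own pause or timer function.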

The number of developed scripts depends on the size of the application. As a rule, from 3 to 10 scripts are created. Each script contains up to 10 steps describing user actions.

Complex applications require more scripts. For example, a portal dedicated to facility building services, with repair work assignment, certificate and allowance creation, and many other functions, required more than 20 test scripts written by the a1qa engineers. Each of the scripts included 15 to 20 steps.


2. Load profile design

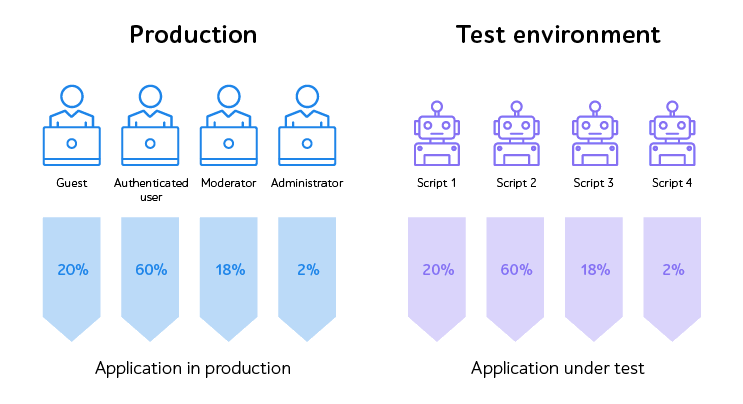

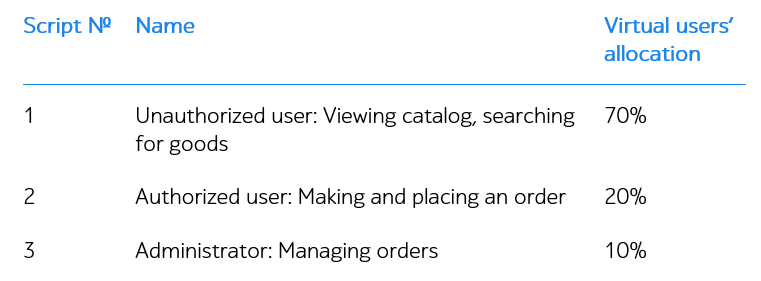

A load profile is also created at the scripts’ development stage. The load profile shows how the transactions performed in the system are distributed among the users of different roles.

The load profile can also be created from page traffic statistics for real users. Naturally, a user does not visit every page. For example, when he or she adds a good to the cart in an online shop, there is always a chance that the user will not get beyond the order placing page. Each action has a certain probability and is performed only by a certain percentage of users. The following ratio was observed during the testing of the online shop:

Therefore, under a load of 1,000 users, 700 of them (70%) will be executing Script 1, 200 (20%) will be executing Script 2, and 100 (10%) will be executing Script 3.
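The arithmetic behind this split can be sketched in a few lines. The 70/20/10 shares are inferred from the user counts quoted above, and the function name `allocate_users` is hypothetical. The largest-remainder rounding is a design choice made here so that the counts always add up to the requested total, even when the shares do not divide evenly.

```python
def allocate_users(total_users, profile):
    """Split total_users among scripts according to integer percentage shares."""
    if sum(profile.values()) != 100:
        raise ValueError("profile shares must add up to 100")
    # Integer arithmetic avoids floating-point rounding surprises.
    counts = {name: total_users * share // 100 for name, share in profile.items()}
    remainders = {name: total_users * share % 100 for name, share in profile.items()}
    leftover = total_users - sum(counts.values())
    # Hand the remaining users to the scripts with the largest remainders.
    for name in sorted(remainders, key=remainders.get, reverse=True)[:leftover]:
        counts[name] += 1
    return counts


LOAD_PROFILE = {"Script 1": 70, "Script 2": 20, "Script 3": 10}

if __name__ == "__main__":
    print(allocate_users(1000, LOAD_PROFILE))
```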

The prepared load profile helps avoid applying more load than real users actually generate.

3. Load scripts design and debug

When the test scripts are created and approved by the customer, the QA performance engineer proceeds with load script development.

As a rule, the development starts with recording the data exchange process between the server and the browser at the level of data communication protocols. The testing tools allow running the recorded process in several parallel threads for generating the necessary system load.

At first glance, the task seems quite simple. However, it is important to make sure that the recorded scripts work properly. The correlation of the dynamic parameters sent to the server (such as an authorization token) should also be set up.
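Correlation means that a value issued by the server in one response is extracted and sent back in the next request instead of the value that was recorded. A minimal sketch of the idea follows; the hidden field name `csrf_token`, the HTML layout, and both helper functions are assumptions made for illustration, and most load testing tools offer their own extractors for this purpose.

```python
import re

# Assumption: the server embeds the token in a hidden form field named "csrf_token".
TOKEN_PATTERN = re.compile(r'name="csrf_token"\s+value="([^"]+)"')


def extract_token(html):
    """Pull the dynamic token out of a server response."""
    match = TOKEN_PATTERN.search(html)
    if match is None:
        raise ValueError("token not found in response")
    return match.group(1)


def build_login_form(html, username, password):
    """Build the next request's form data using the freshly extracted token."""
    return {
        "username": username,
        "password": password,
        "csrf_token": extract_token(html),
    }


if __name__ == "__main__":
    page = '<form><input type="hidden" name="csrf_token" value="abc123"></form>'
    print(build_login_form(page, "PerfTestUser1", "secret"))
```

Raising an error when the token is missing makes a broken correlation visible during debugging instead of letting the script silently replay a stale value.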

The performance testing tools allow parametrizing the request itself (the headers, cookies, and body parameters) in order to achieve uniqueness. Parametrization is done with the help of built-in functions, although sometimes writing code might be necessary. The uniqueness of virtual user actions is achieved with functions that perform loops, branching, and value randomization.

When developing scripts, the QA performance engineer should carefully compare the network traffic generated by the load scripts with the traffic from the browser of a real user. This can be done with the help of the sniffing tool Fiddler.

The structure and the size of requests and responses must be exactly the same. The key server responses should also be checked for correctness, because when an error occurs, modern systems may respond to a user with a special error notification rather than an HTTP error code. The performance testing tools allow us to set up criteria for checking server responses.
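Such a check can look at both the status code and the response body. The sketch below is tool-independent; the function `check_response` and the marker strings are hypothetical and would have to be replaced with the texts the tested application really returns.

```python
def check_response(status_code, body, expected_text,
                   error_markers=("Something went wrong", "Session expired")):
    """Return a list of problems found in a response; an empty list means it passed."""
    problems = []
    if status_code >= 400:
        problems.append(f"HTTP status {status_code}")
    # An error page can arrive with status 200, so the body is inspected as well.
    lowered = body.lower()
    for marker in error_markers:
        if marker.lower() in lowered:
            problems.append(f"error marker found: {marker!r}")
    if expected_text not in body:
        problems.append(f"expected text missing: {expected_text!r}")
    return problems


if __name__ == "__main__":
    print(check_response(200, "<p>Something went wrong</p>", "Your order"))
```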

Besides the steps described above, particular attention is paid to emulating browser data caching and other features of the client’s behavior. This approach helps bring the applied load as close as possible to the real one.

During the final stage of the scripts debugging, a series of preliminary tests with a low load is conducted. The measure is necessary to ensure that the scripts work correctly for various user accounts: dynamic parameters’ transmission, HTTP error codes, and request/response structure are checked.

Preliminary tests also allow finding the most suitable load pattern, for example, +1 virtual user every 3 seconds.

The steps described above are often skipped, which leads to errors during load script execution at the final testing stage. As a result, additional time may be required to find and fix the defects.


4. Generating a realistic set of test data

Before launching the final tests, test data must be generated in an amount sufficient for the scripts to operate correctly. This step should never be omitted, as it may influence the testing results. When a thousand virtual users perform actions from one account, the requests will be cached on the server side.

Moreover, there may be conflicts among virtual users performing similar actions. For example, when users add goods to the cart in an online shop, there is a chance that they will click the “Pay the order” button at the same time. This coincidence will cause additional errors. Furthermore, this approach will not provide realistic performance results, as only one account will ever be updated in the database.

There is no universal approach to test data generation. As a rule, the most appropriate method is chosen after the system analysis. At this stage, the types and the structure of the test data are discussed with the development team. Both the QA performance engineer and the development team may execute the data generation process.

The test data can be classified into three main types:

- User accounts with various roles

Special attention is paid to the uniqueness of the virtual user names and to their passwords, which must meet the specified security criteria. For convenience, user names are created according to established patterns. For example, all test users may have the last name “a1qa” and the first name “PerfTestUserX”, where X is a number from 1 to 1,000. Such a pattern helps identify the test users in the database.

Sometimes it is necessary to create users with profiles of different sizes. A “heavy” user has a lot of database entries and connections among them. A “light” user has relatively few entries. Performance testing of a billing system showed that the results for the “heavy” users were significantly worse.

User accounts can be created through the user interface, through API calls made by automation scripts, or by updating entries in the database directly.
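The naming pattern described above is easy to generate in code. In the sketch below, the role names, the password rules (at least one lowercase letter, uppercase letter, digit, and symbol), and both helper functions are assumptions; the real criteria come from the tested system. The resulting records could then be fed to whichever creation method is chosen.

```python
import secrets
import string

SYMBOLS = "!@#$%"


def make_password(length=12):
    """Generate a random password that satisfies the assumed complexity rules."""
    alphabet = string.ascii_letters + string.digits + SYMBOLS
    while True:
        password = "".join(secrets.choice(alphabet) for _ in range(length))
        if (any(c.islower() for c in password)
                and any(c.isupper() for c in password)
                and any(c.isdigit() for c in password)
                and any(c in SYMBOLS for c in password)):
            return password


def make_users(count, roles=("customer", "manager")):
    """Create user records named PerfTestUser1..PerfTestUser<count>, cycling through roles."""
    return [
        {
            "first_name": f"PerfTestUser{number}",
            "last_name": "a1qa",
            "role": roles[(number - 1) % len(roles)],
            "password": make_password(),
        }
        for number in range(1, count + 1)
    ]


if __name__ == "__main__":
    for user in make_users(3):
        print(user["first_name"], user["last_name"], user["role"])
```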

- Import files of different types and sizes

User accounts need data to work with. Sometimes it is necessary to measure not only the handling time for requests but also to evaluate the load speed of the files. These values may be of the highest importance, for example, for enterprise software products.

Test data is organized in a format that corresponds to a particular type of uploaded document and has specific features: type (MS Word, Excel, PDF, JPEG, etc.), size, and content. Files can be generated with the fsutil command entered in the command line or with PowerShell commands, which generate files of the necessary size and quality.
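For teams that prefer a cross-platform script over fsutil or PowerShell, the same result can be approximated in Python. This is a sketch under clear limitations: the helper `create_file` is hypothetical, and the files it writes have the requested size only; they are not valid Word, PDF, or JPEG documents, so they suit upload size tests but not content validation.

```python
import os
import tempfile

CHUNK = 1024 * 1024  # write in 1 MiB pieces to keep memory use low


def create_file(path, size_bytes, random_content=False):
    """Create a file of exactly size_bytes, filled with zeros or random bytes."""
    remaining = size_bytes
    with open(path, "wb") as handle:
        while remaining > 0:
            piece = min(CHUNK, remaining)
            # Random bytes defeat compression; zeros are faster to produce.
            handle.write(os.urandom(piece) if random_content else b"\0" * piece)
            remaining -= piece


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as folder:
        target = os.path.join(folder, "upload_5mb.bin")
        create_file(target, 5 * 1024 * 1024)
        print(target, os.path.getsize(target))
```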

- Valid data for filling in forms

Web pages often contain forms to fill in. These forms consist of various fields that should be completed with personal data. The server processes the received data and, as a rule, saves it to the system database. For successful data processing, the validity requirements must be observed. For example, the entered data (e.g. phone or credit card number) should have an appropriate format. If the data is not valid, the server may return an error message.

One approach to generating valid data is to write code that produces values in the appropriate formats. The built-in value randomization functions of performance testing tools can also be used.
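As an illustration of format-aware generation, the sketch below produces card numbers that pass the Luhn checksum, which many payment forms verify. The function names, the prefix "4", and the 16-digit length are assumptions chosen for the example; the generated numbers are syntactically valid test values only and do not belong to real cards.

```python
import random


def luhn_check_digit(partial):
    """Return the digit that makes partial + digit pass the Luhn check."""
    total = 0
    # Walking right to left, the rightmost digit of the partial number is doubled first.
    for index, char in enumerate(reversed(partial)):
        digit = int(char)
        if index % 2 == 0:
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return str((10 - total % 10) % 10)


def luhn_valid(number):
    """Check a complete number against the Luhn algorithm."""
    total = 0
    for index, char in enumerate(reversed(number)):
        digit = int(char)
        if index % 2 == 1:  # every second digit, counting from the right
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


def random_card_number(rng, prefix="4", length=16):
    """Generate a random number with the given prefix that passes the Luhn check."""
    body_length = length - len(prefix) - 1
    body = prefix + "".join(str(rng.randint(0, 9)) for _ in range(body_length))
    return body + luhn_check_digit(body)


if __name__ == "__main__":
    print(random_card_number(random.Random(7)))
```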

When the test data types and means of their generation have been identified, the QA performance engineer should forecast the amount of data sufficient for successful test completion and identify the most efficient way of clearing the database.

5. Choosing load scheme

The aim of the performance testing should also be determined before the start. The testing process may be conducted with the help of several test types. The schemes below show how the load is applied during various types of tests.

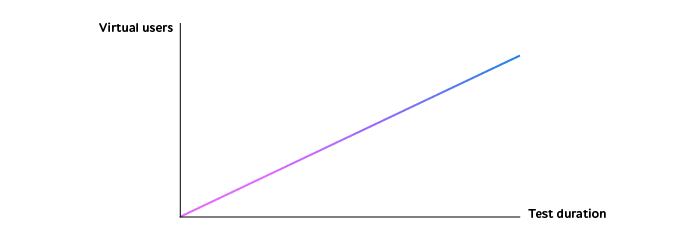

Scheme №1 – a continuous linear increase in the number of virtual users.

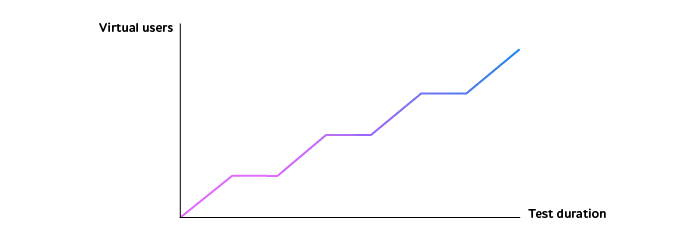

Scheme №2 – a step-like increase in the number of virtual users.

There are time spans when the number of users does not change.

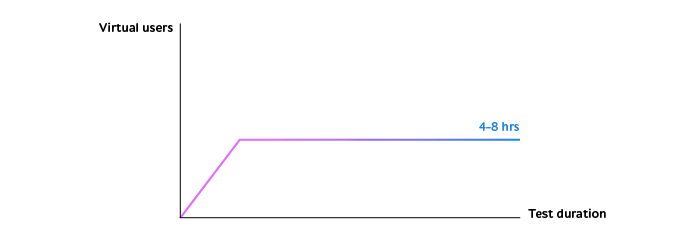

Scheme №3 – a linear increase in the number of users until a certain moment, after which the number of users remains the same for a long while.

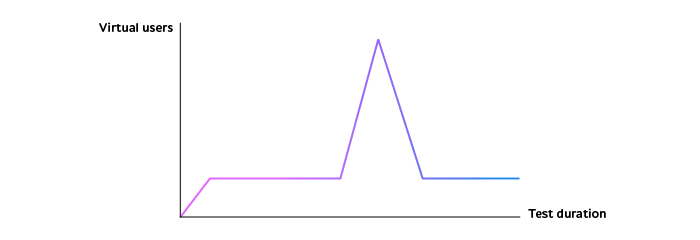

Scheme №4 – the number of users is maintained at an average level, as in scheme №3, but at some point there is a sudden load increase.

Scheme №5 – a custom change in the number of users, which can combine the schemes described above (№1-4).
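Each scheme can be expressed as a function that returns the number of active virtual users at a given moment of the test. The sketch below models schemes №1-4; all function names and parameter values are illustrative assumptions, and real tools usually configure the same shapes through their own ramp-up settings.

```python
def linear(t, users_per_second):
    """Scheme 1: continuous linear growth."""
    return int(t * users_per_second)


def stepped(t, step_users, step_seconds):
    """Scheme 2: add step_users at the start and then every step_seconds."""
    return (int(t // step_seconds) + 1) * step_users


def ramp_and_hold(t, ramp_seconds, target_users):
    """Scheme 3: linear growth up to target_users, then a constant load."""
    if t >= ramp_seconds:
        return target_users
    return int(target_users * t / ramp_seconds)


def hold_with_spike(t, ramp_seconds, target_users, spike_start, spike_end, spike_users):
    """Scheme 4: like scheme 3, but with a sudden peak between spike_start and spike_end."""
    if spike_start <= t < spike_end:
        return spike_users
    return ramp_and_hold(t, ramp_seconds, target_users)


if __name__ == "__main__":
    for second in (0, 30, 60, 200, 300):
        print(second, hold_with_spike(second, 60, 100, 180, 240, 300))
```

A custom profile (scheme №5) can be composed from these building blocks, for example by switching from one function to another at chosen moments.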

At a minimum, we recommend conducting stress testing and load testing.

Stress tests help find the upper limit of the system performance (schemes №1 and 2). Load tests make sure that the system performance does not decrease under an average load lasting for a long time span (scheme №3).

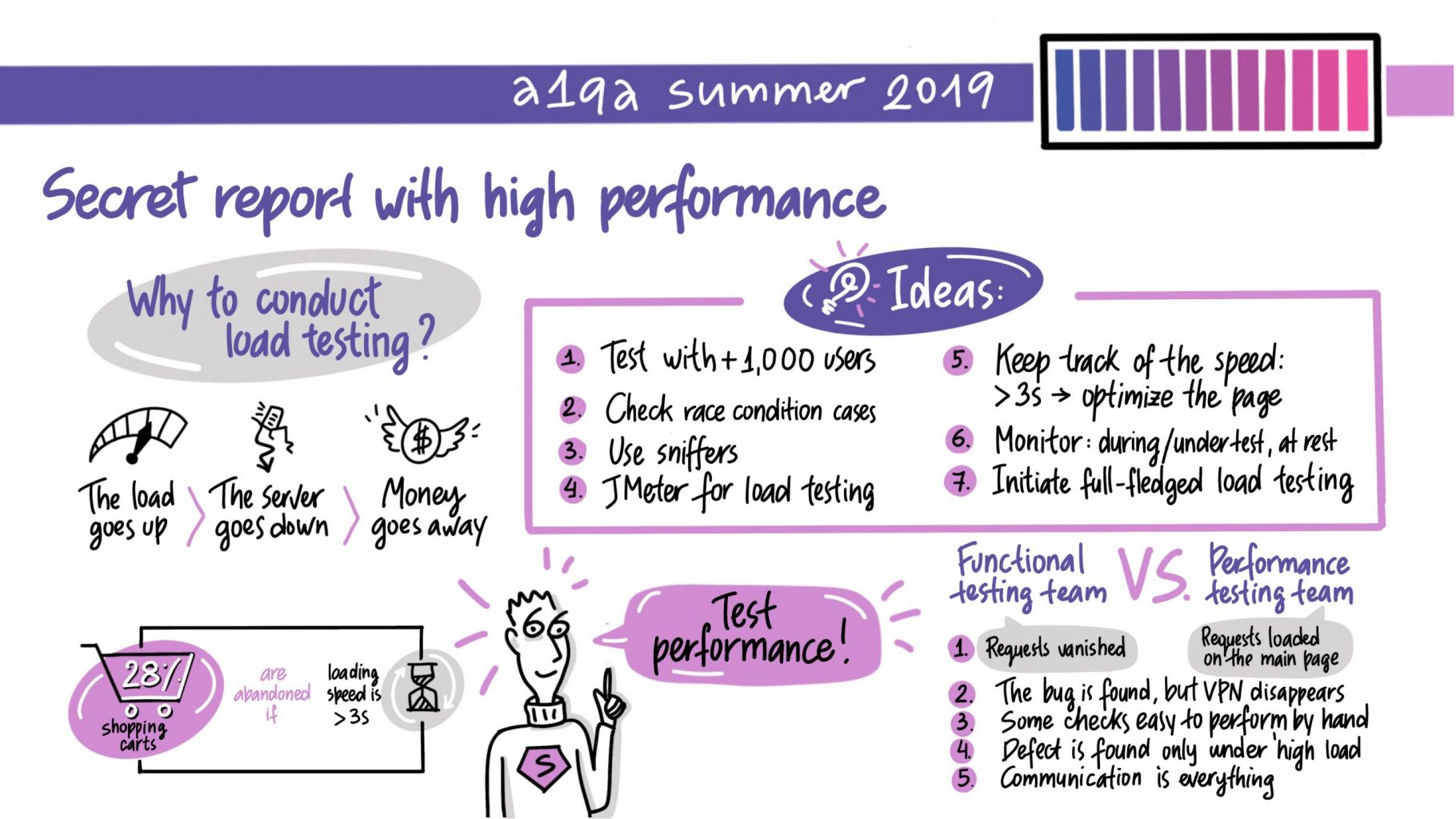

At the traditional a1qa summer professional conference, the QA specialists made a presentation partly devoted to the topic of load testing. See below the reasons for conducting this testing type and the ideas on how to do it right.

The choice of the load scheme may be determined by the peculiarities of the system and its performance requirements. For example, a limited bonus distribution on a large gaming portal is scheduled for a specific date. The owners of the portal expect an influx of users and, therefore, want to be sure that the system is capable of coping with an extreme load. Scheme №1, with an increase of 3,000 users every 10 seconds, can be applied to test the system. In contrast, corporate systems are used by approximately the same number of users throughout the whole working day. The most suitable approach for this situation is to apply load scheme №3 for 8-10 hours.

Another example is online shops that are exposed to an influx of users during seasonal sales. The expected number of visitors for the next sale can be predicted from the statistics on the normal period and on the periods of peak load. High system stability during peak loads may be a performance requirement for such a system. In this case, we recommend using scheme №4 as an additional load scheme.


6. Distributed load testing from multiple geographic locations

We recommend placing load generators as close to the testing environment as possible. This approach helps avoid problems related to network bandwidth, as delays may vary from milliseconds to dozens of seconds. Placing the load generator in the same local network as the testing environment ensures the fastest data exchange between the server and the client. Thus, the application performance is tested in conditions close to ideal.

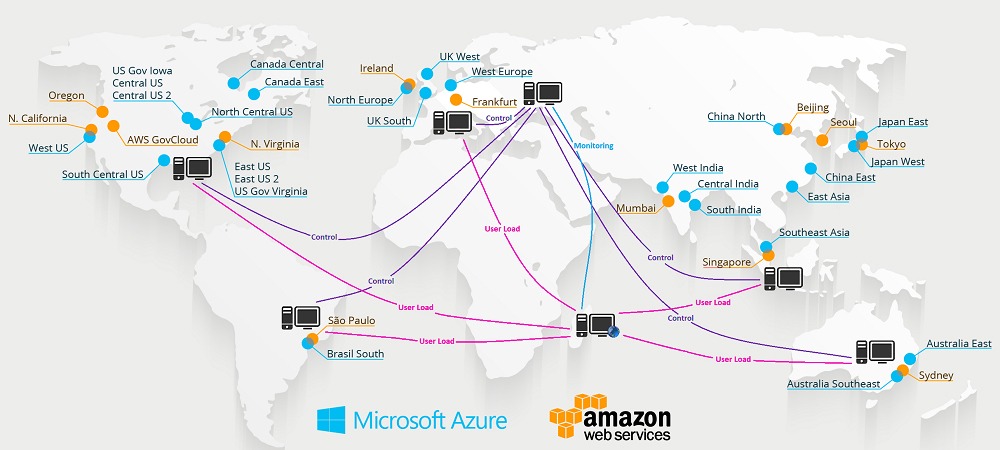

Sometimes the task is, on the contrary, to measure the key performance parameters by simulating the actions of the real users from remote regions. This is the case for a gaming news website visited by users from all over the world. In order to provide a realistic load, the geographical factor should be taken into consideration. The necessary information can be obtained from web analytics tools.

To accomplish this task, a distributed load test should be performed, in which virtual users are distributed among several load generators located in different geographical regions. Virtual machines rented from cloud services (Amazon Web Services, Microsoft Azure, Google Cloud, etc.) are used as load generators for this testing type.

During the test configuration, a client (master, test controller) and servers (slaves, test agents) are created in, for example, the US, Europe, Brazil, and Australia. A client is a machine collecting and showing the results, whereas servers are used as load generators. The load is applied from all servers simultaneously.

The test results show the system performance as experienced by end users and indicate whether additional servers should be placed in the regions with the worst results.
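Comparing regions usually comes down to ranking them by a response time percentile collected from each load generator. The sketch below uses the nearest-rank method; the function names are hypothetical and the sample timings are invented purely to make the example runnable, so they say nothing about real measurements.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def rank_regions(samples, pct=90):
    """Return (region, percentile) pairs, slowest region first."""
    scores = {region: percentile(times, pct) for region, times in samples.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Invented response times in seconds, one list per load generator region.
    response_times = {
        "US": [0.21, 0.25, 0.30, 0.40],
        "Europe": [0.35, 0.38, 0.45, 0.60],
        "Australia": [0.90, 1.10, 1.20, 1.50],
    }
    for region, value in rank_regions(response_times):
        print(f"{region}: 90th percentile {value:.2f} s")
```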

Now you have seen the basic rules of how to apply the load to the system. The result received with the help of the user behavior method is close to the actual state of the system performance.

An additional advantage of this approach is the possibility to find functional defects that appear only under load. Scripts developed according to the actions of real users help localize such defects.
