How DevOps is changing software testing

Once upon a time, software QA was done by teams taking small aspects of a piece of software and testing every conceivable variation. Times have changed and so has the software industry. We no longer think of software as being packaged in a box and bought off store shelves. Old-style massive full versions of software are no longer the norm; now most companies deliver software via the internet in small bursts. Unless there is to be a major change, the updates are just made and instantly applied without users having a clue.

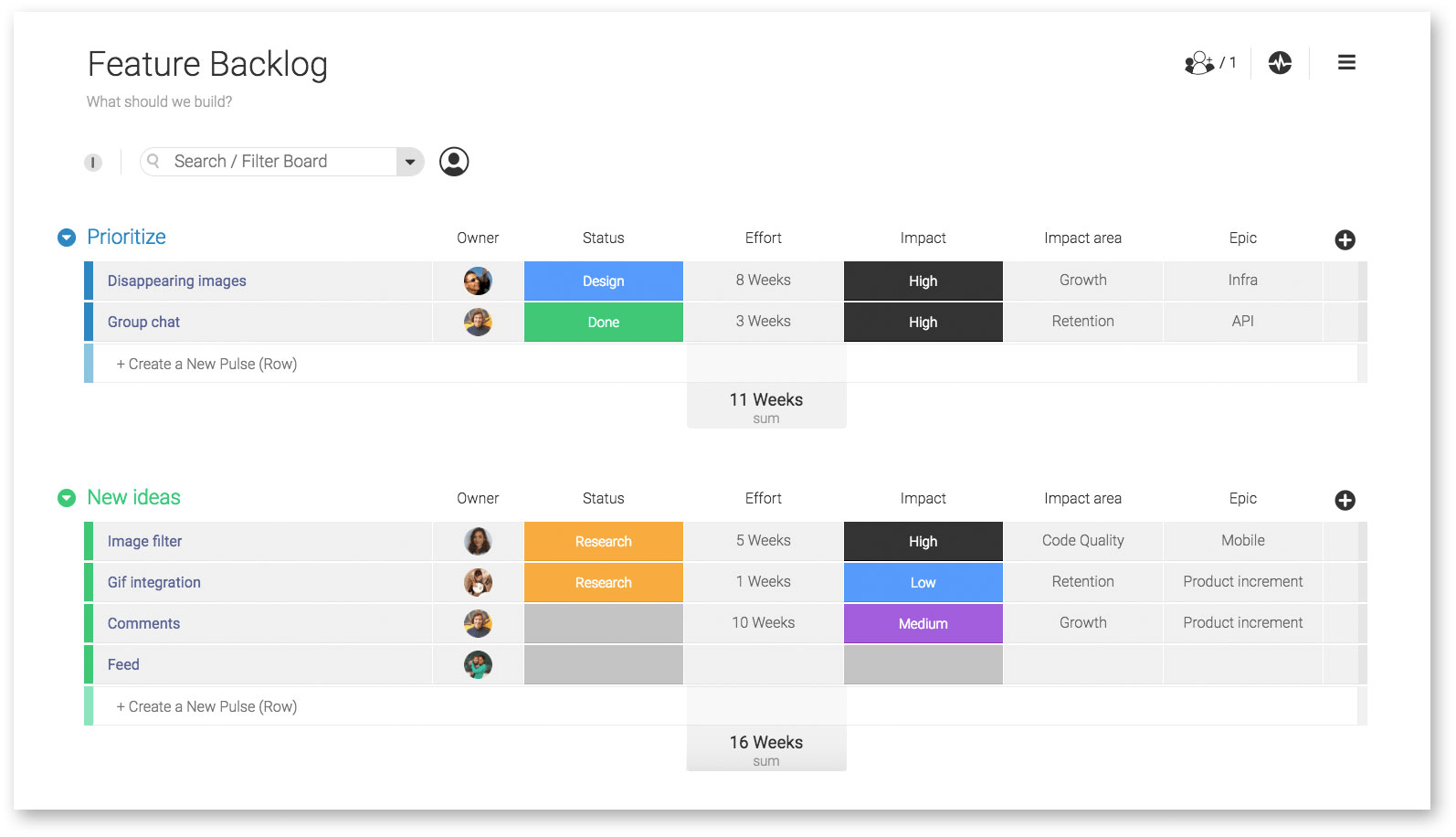

The move from gigantic disc-based software to these incremental on-the-fly updates required a great deal of thinking behind the scenes. First, the programmers became Agile and set themselves small achievable goals. They would spend a week, maybe two, working on adding some features or fixing a couple of bugs as in these images:

While the old mindset had developers creating software and handing it off to the operations team to maintain, the newer DevOps approach has those programmers working together with their operations team throughout the process.

The key is that now much of the company is both working and planning to get the product to an agreed-upon state. Instead of waiting for the programmers to push their possible final build to the testers after, those testers are now involved from the get-go.

Granularity

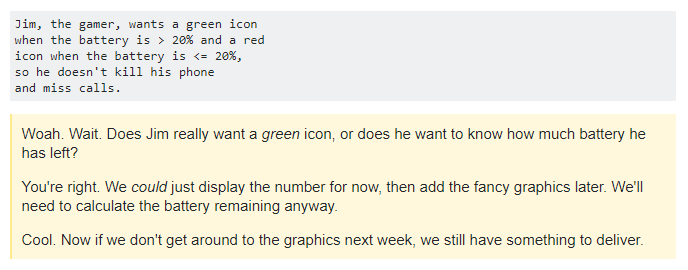

To make a build cycle take as little time as possible, its scope is recursively reduced. Here is an example of one of those tasks, or stories, being further refined:

This shows an attempt to break down and refine the goal into the smallest bites possible. These smaller and smaller bites make it easier to plan software testing. QA engineers can better estimate the time needed for testing and fixing any defects discovered.

Number of tests

When talking about testing in Agile environments, the team has to be able to trust that the code will perform as expected. That trust, or its lack, can be easily understood if you look at it in light of the number of tests. More testing means higher probability that your program will build and deploy successfully. Your confidence about the application will be much higher if it passes 295 out of 300 tests than if it passes two out of three.

Size of tests

The other side is the size of the tests you need to do. With large, multifaceted applications, it may be difficult to test the whole program after every build. In those cases, you test only the parts that are important or that have been changed. There is never a set-in-stone group of required tests, your teams can add or subtract as needed.

Frequency of tests

How often you test can depend on the types of tests and what resources they require. For an application that consists of a bunch of smaller programs, it is pretty straightforward to test just what has been changed. If, however, your program is huge, you may not be able to run all your tests after each section of code is committed. In that case, you run the subset of tests that you need to and then run your full test at night. Basically, if the team decides that a test needs to be run, its size or runtime will not matter. For a test that takes three days to run, you just automate it to run every three days at a certain time.

Automation and continuous delivery

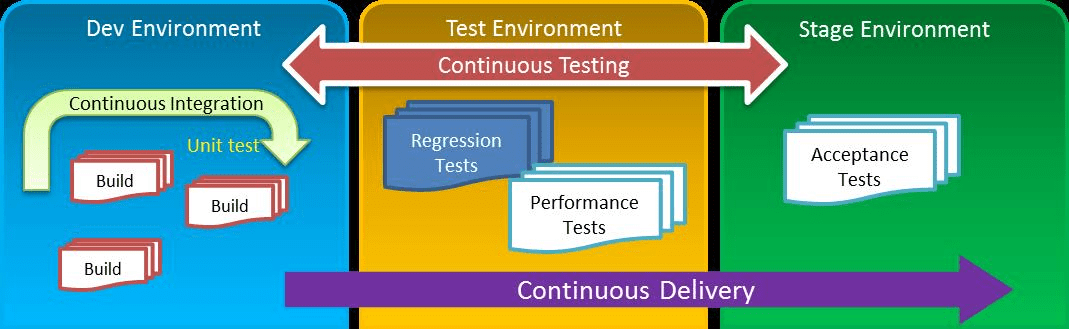

In the past, testing engineers focused only on one part of the software development cycle—testing the code and pushing it on to the programmers. Although with DevOps, that has changed—with continuous integration and delivery, testers are now required to take responsibility for quality throughout the entire development process, not just the old QA phase.

Building more tests into the software

In the world of DevOps, it is important to have regular deployments—the automation of processes can be a big help in making that happen. That said reduced deployment time and more frequent software releases mean more testing will need to be done. If there is no automation, large numbers of test cases must be run manually, which will slow down the whole process. Teams need to increase their testing and will have to automate more and more of it.

Automation for testing environments (virtualization)

A test environment is an internal setup of software and hardware that emulates the environments where the finished product would eventually deploy. The team can then test the software on virtual systems to catch defects before it rolls out to production. Cap Gemini’s included diagram shows several varieties of testing environments.

If testing environments are automated effectively, the amount of manual intervention will be greatly reduced. However, if the automation is done incorrectly, the QA team will have to fix test environments manually, which will ultimately increase the amount of manual work—not something you would expect from automation.

Effect on teams

In the old model, QA wasn’t in touch with the development and delivery processes. DevOps get them working together.

Increased communication and collaboration

In DevOps, QA engineers must actively collaborate with other departments. They attend planning sessions and communicate to the rest of the project team what will be tested next, when, how, and by whom. This may require the QA to step out of their comfort zones, but it will be necessary for effective work.

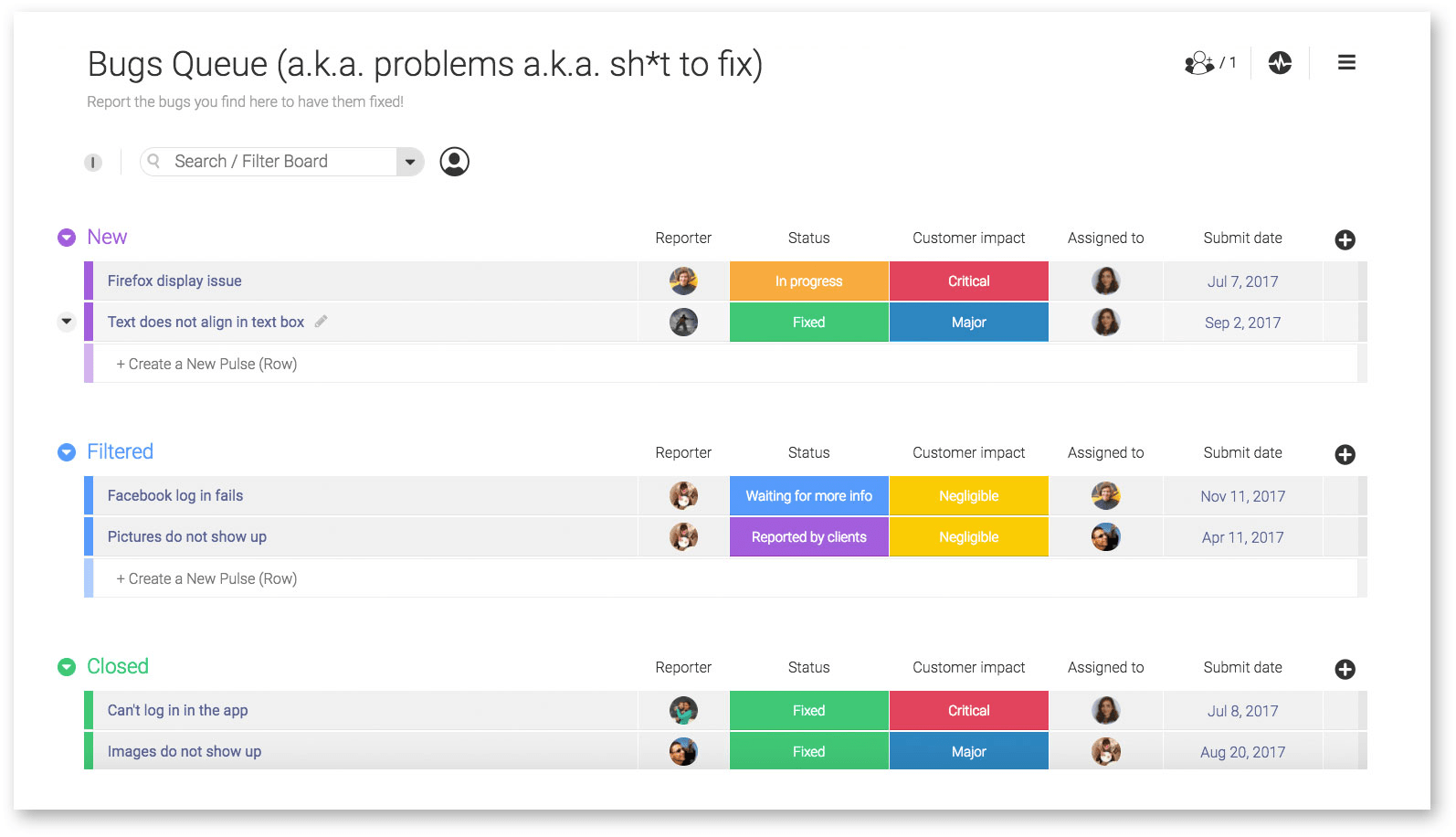

The right project management solution is critical to keeping all teams aligned by giving everyone clear visibility on the project’s progress between meetings.

Fail faster, fix faster

Coding is a practice that often requires extreme concentration. Getting back on track, or more specifically, getting back to where you were mentally when you added a bit of code can be very difficult.

For this and other reasons, many teams work on the Fail Faster, Fix Faster principle. Jim Shore said in his 2004 IEEE article on the subject that, “failing fast is a non-intuitive technique. Failing immediately and visibly… makes bugs easier to find and fix.” This method makes it very easy for an error to stay in context for the developer. They will not have to search for hours to fix it.

Some software is given the ability to compensate for errors. This can result in a bigger mystery fail. Fail fast suggests that the error should be allowed to happen right away so that it can be detected and fixed faster. These bugs appear sooner and don’t reach production, thus reducing costs.

Closing thoughts

The move to the DevOps methodology can help production teams bring software to their customers faster. In addition to having the developers work closely with the operations team, we now have the QA testers pulled into this collaborative effort. All three teams work and plan the steps for the full rollout—keeping in mind that this consists of lots of little rollouts. The QA team is now there in the thick of things automating and testing their little hearts out. No longer are they necessarily the last stop feeling left out in the cold or blamed for the product release being late.

Author: Steve Medeiros, a writer for TechnologyAdvice.com with an extensive background in technology, software, and customer support.