QA metrics for managers: defects and developers

In previous articles we discussed how to define the quality rate, how to evaluate testers' capacity, and how to describe bugs. All of that concerned the tester's work, but a tester only detects a bug, while a developer fixes it. So this time I would like to cover the DEVELOPER's niche on the QA consulting map. Taking bug statistics as a basis, I want to talk about the indexes that reflect a developer's work efficiency.

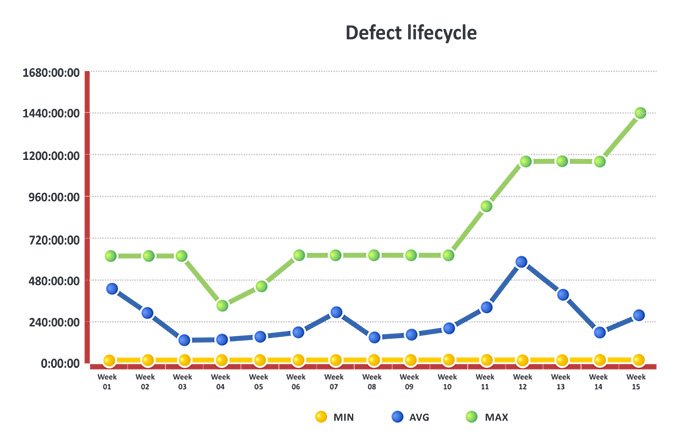

The first one is about the length of the defect lifecycle. The index comprises the “defect processing period”, the “defect fixing period” and some other elements.

When running these metrics, pay special attention to how long it took to fix the defect and which step took the most time. Remember to track how the index changes and plot the changes on a diagram, like this one, for example.

Defect lifecycle longevity

The method of data collection resembles the one I described in the first article of QA metrics for managers, when I talked about defects with the status “functions as designed”.

When you start calculating, you'll see that the index grows as the defect lifecycle gets longer. Considerable delays can be observed at stages such as processing, assigning and queuing.
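To make the calculation more concrete, here is a minimal sketch of how the time spent at each stage could be derived from a defect's status history. The status names, the timestamps and the `stage_hours` helper are assumptions made up for illustration; a real bug tracker will export this data in its own format.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical status history for one defect:
# (status, moment the defect entered that status).
history = [
    ("queued", datetime(2024, 3, 1, 9, 0)),
    ("assigned", datetime(2024, 3, 1, 15, 0)),
    ("processing", datetime(2024, 3, 2, 10, 0)),
    ("fixing", datetime(2024, 3, 4, 10, 0)),
    ("closed", datetime(2024, 3, 4, 16, 0)),
]


def stage_hours(history):
    """Return the hours spent in each status, based on consecutive timestamps."""
    totals = defaultdict(float)
    # Pair every entry with the next one; the gap between them is the
    # time the defect stayed in the earlier status.
    for (status, start), (_, end) in zip(history, history[1:]):
        totals[status] += (end - start).total_seconds() / 3600
    return dict(totals)


hours = stage_hours(history)
lifecycle_hours = sum(hours.values())      # total length of the lifecycle
longest_stage = max(hours, key=hours.get)  # the step that took the most time

print(hours)
print("Total:", lifecycle_hours, "h; longest stage:", longest_stage)
```

Summing a status more than once (for example, when a defect is reopened and fixed again) is handled by accumulating into `totals`, so repeated stages add up rather than overwrite each other.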

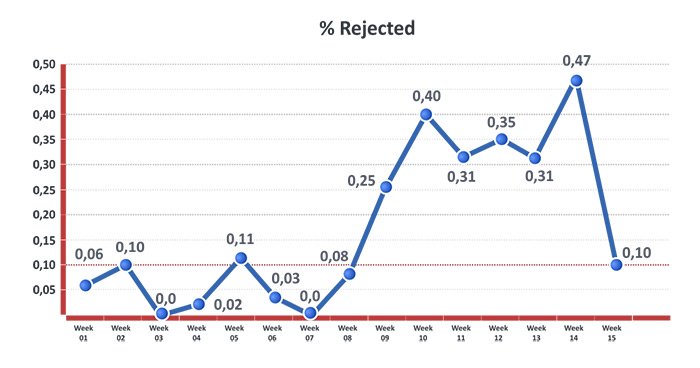

The other index is the percentage of rejected bugs. A defect is considered rejected when a tester, after checking the fix, marks it as not fixed. That means the cycle has to start over: report to a developer, re-fix, re-check. The time spent on this additional communication is effectively wasted. On large-scale projects such things cause quite tangible additional expenses.

Having worked on multiple projects, I have noticed that 10% is an acceptable value for the index; try to stay within that limit. It is better to calculate the index after every release, especially on a large-scale project where more than two builds are released per week.
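A minimal sketch of the per-release calculation might look like this. The release names and counts are invented, and the choice of denominator (all fixes that a tester checked in that release) is my assumption, so adjust it to whatever your team counts as the base.

```python
ACCEPTABLE_RATE = 10.0  # percent, the limit suggested above


def rejected_rate(checked_count, rejected_count):
    """Percentage of checked fixes that the tester marked as not fixed."""
    if checked_count == 0:
        return 0.0  # nothing was checked, so nothing was rejected
    return 100.0 * rejected_count / checked_count


# Hypothetical per-release data: (fixes checked, fixes rejected).
releases = {
    "1.0": (120, 9),
    "1.1": (80, 12),
}

report = {name: rejected_rate(*counts) for name, counts in releases.items()}
over_limit = [name for name, rate in report.items() if rate > ACCEPTABLE_RATE]

for name, rate in report.items():
    print(f"Release {name}: {rate:.1f}% rejected")
print("Releases over the limit:", over_limit)
```

Keeping the threshold in one named constant makes it easy to show the same limit in every report.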

Remember to include the index in every report to keep the tester and developer teams informed. By doing this you encourage team members to bring the percentage down and make the positive dynamics of the work visible.