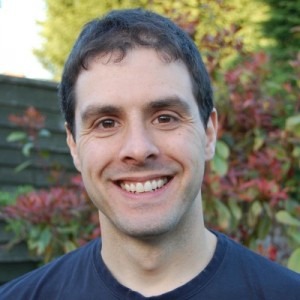

Interview with Adam Knight: Big Data exploratory testing

In the second part of the interview, Adam Knight talks about combining exploratory testing with automated regression checking when testing Big Data systems. If you missed the first part of the interview with Adam, on recent changes in testing, you can find it here.

Adam Knight is a passionate tester who contributes eagerly to the testing community. He is an active blogger and regularly presents at testing events such as Agile Testing Days, UKTMF, STC Meetups and EuroSTAR.

Adam, you specialize in testing Big Data software using an exploratory testing approach. Why do you find it necessary to do exploratory testing?

It is not so much that I find exploratory testing necessary. Rather, I would say that in my experience it has been the most effective approach available to me in testing the business intelligence systems that I have worked on.

My preferred approach for testing when working on such systems is pretty much:

- Perform a human assessment of the product or feature being created.

- At the same time automate checks around behavior relevant to that assessment to provide some confidence in that behavior.

- If at some point the checks indicate a different behavior than expected, then reassess.

- If you become aware that the product changes in a way that causes you to question the assessment, reassess.
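As a rough illustration of the second and third points, the sketch below pairs each behavior accepted during a human assessment with an automated check and lists the checks that now disagree, which are the areas to reassess. The `Check` class, the `run_checks` helper and the sample values are all hypothetical and stand in for queries against a real system; this is not a description of the tooling Adam's teams used.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Check:
    """An automated check guarding one behavior a human has already assessed."""
    name: str
    observe: Callable[[], object]  # runs the system and returns what it did
    expected: object               # the behavior accepted during the assessment


def run_checks(checks: List[Check]) -> List[str]:
    """Return the names of checks whose observed behavior differs from the assessed one."""
    return [c.name for c in checks if c.observe() != c.expected]


# Hypothetical stand-ins for queries against the system under test.
checks = [
    Check("row count for region", lambda: 3, expected=3),
    Check("sum of sales", lambda: 41.5, expected=42.0),
]

# Any name returned here is a prompt for a human to explore that area again.
needs_reassessment = run_checks(checks)
print(needs_reassessment)
```

The checks only report that behavior has changed. Deciding whether the change is a problem remains a human, exploratory task.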

I believe that exploratory testing is the most effective approach for rapidly performing a human assessment of a product. That applies to the initial assessment, but particularly to reassessment in response to unexpected behavior or newly identified risks, as in the last two points above, where you may not have a defined specification to work from.

The combination of exploratory testing and automated regression checking is a powerful one in testing Big Data systems, as well as many other types of software.

What peculiarities of exploratory testing can you mention?

That’s an interesting question. I’m not sure I would describe it as a peculiarity; however, one characteristic of exploratory testing that I believe makes it most effective is the inherent process of learning within an exploratory approach. As I described in response to the previous question, testing can often come in response to the identification of an unexpected behavior or a newly identified risk.

Exploratory approaches incrementally target testing activity at the areas of risk within a software development project, thereby naturally focusing the tester’s effort where problems are most apparent. This helps to maximize the value of testing time, which is a valuable commodity in many development projects.

How can a tester find the right balance between exploratory and scripted testing?

It isn’t always up to the tester. I am a great believer in the autonomy of individuals and teams, and in allowing people to find their own most effective ways of working. Many organizations, however, don’t adhere to this mentality and believe in dictating approaches as a corporate standard.

Many testers I’ve spoken to or interviewed in such environments perform their own explorations covertly, in addition to the work required to adhere to the imposed standards.

For those who do have some control over the approach that they adopt, the sensible answer for me is to experiment and iterate. I’d advocate experimentation and learning to find the right balance across your testing, whether scripted vs exploratory, manual vs automated or any other categorization you care to apply.

What are the main difficulties when it comes to Big Data testing?

The challenge of testing a Big Data product was one that I really relished, and in my latest role I’m still working with business intelligence and analytics. When I was researching the subject of Big Data, one thing that became apparent to me was that Big Data is a popular phrase with no clear definition.

The best definition that I could establish was that it relates to quantities of data that are too large to manage and manipulate using methods established enough to be considered ‘traditional’.

The difficulty in testing is then embedded in the definition. Many of the problems that Big Data systems aim to solve are problems which present themselves in the testing of these systems.

Issues such as not having enough storage space to back up your test data, or not being able to manage the data on a single server, affect testing just as they do with production data systems.

Typically, those responsible for testing huge data systems won’t have access to the capacity or the time to test at production levels – some of the systems I worked on would take 8 high-specification servers running flat out for 6 months to import enough data to reach production capacity.

We simply didn’t have the time to test that within Agile sprints. The approach that I and my teams had to adopt in these situations was to develop a deep understanding of how the system worked, and how it scaled.

Any system designed to tackle a big data problem will have in-built layers of scalability to work around the need to process all of the data in order to answer questions on it. If we understand these layers of scalability, whether they be metadata databases, indexes or file structures, then it is possible to gain confidence in the scalability of each without necessarily having to test the whole system at full production capacity each time.
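One way to picture gaining confidence in a single layer without loading production volumes is to measure how its cost grows as the data grows. The toy sketch below uses a sorted key list searched by binary search as a stand-in for an index layer and counts how many keys a lookup inspects at increasing sizes. The `index_probes` and `probes_by_size` functions and the chosen sizes are assumptions made purely for illustration; a real system's layers would need their own measurements.

```python
def index_probes(sorted_keys, target):
    """Binary-search a sorted key sequence, returning how many keys were inspected."""
    lo, hi, probes = 0, len(sorted_keys), 0
    while lo < hi:
        mid = (lo + hi) // 2
        probes += 1
        if sorted_keys[mid] < target:
            lo = mid + 1
        else:
            hi = mid
    return probes


def probes_by_size(sizes):
    """Measure lookup cost for the last key at each data size."""
    # range(n) behaves like a sorted list of n keys without using memory for them.
    return {n: index_probes(range(n), n - 1) for n in sizes}


probes = probes_by_size([1_000, 1_000_000, 1_000_000_000])
for size, count in probes.items():
    print(f"{size:>13,} keys: {count} probes")

# A thousandfold increase in data should cost only a few extra probes,
# which is the kind of evidence that lets us reason about larger volumes.
assert probes[1_000_000] <= 3 * probes[1_000]
```

If the cost of the layer grows slowly and predictably at sizes that are practical to test, that supports reasoning about its behavior at production scale, although it does not replace checking how the layers interact.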

So Big Data testing is all about understanding the system and being surgical with your testing. Taking a brute-force approach to performance and scale testing on that kind of system is not an option.

Thanks for sharing your viewpoint with us.

If you want to learn more from Adam, visit his blog at a-sisyphean-task.com.